The people in our R&D team have different backgrounds. We have business backgrounds in marketing, software development, and economics. We have academic backgrounds in mathematics, geography, physics, systems biology, and more. And we work with different tools such as .NET, Python, and R.

We might imagine that these differences could lead to misunderstandings and duplication of effort. But overall the variety in our approaches to problems improves the speed and quality of our work.

Developing the similar companies score

One example is the new similar companies feature that we recently released as ALPHA in our live products.

The challenge was to calculate the similarity of all pairs of companies for which we have websites in our database. That’s an enormous 1.6 million squared (2.5 trillion) document comparisons.

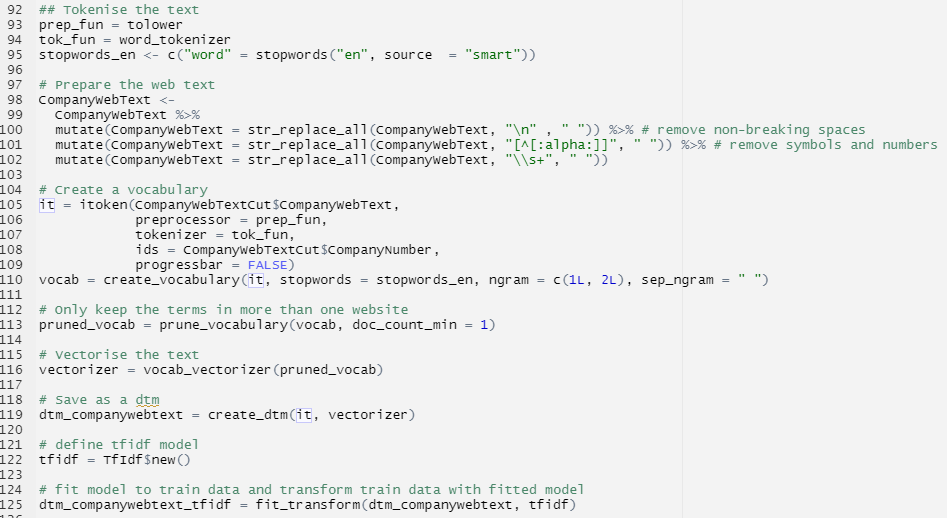

First we needed to find the best way to calculate similarity between two companies. For this we used R. It lets us rapidly and flexibly prototype and compare hundreds of different methods and parameters for each method. R Markdown makes it simple to explain our working and produce graphs and documents so that we can discuss the best approach to take as a team.

Implementing the similar companies score

While R is great for rapid prototyping and discussion, it seem less good at performing computation at scale. And with 2.5 trillion calculations to do, we need scale.

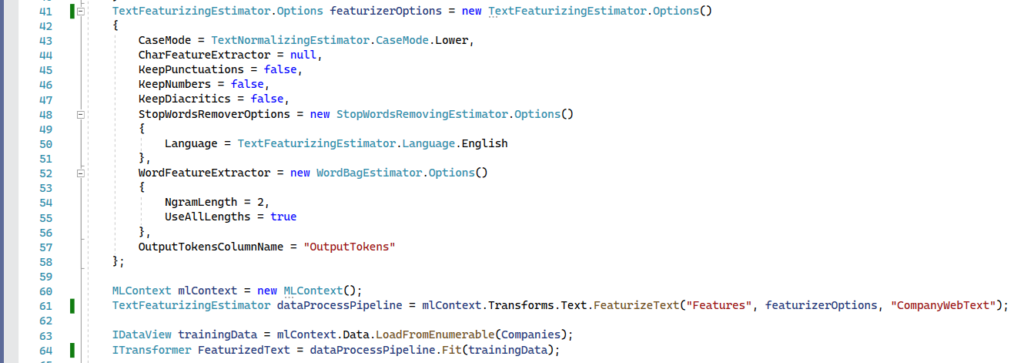

In recent years a common toolset of natural language processing (NLP) and machine-learning (ML) has matured in all major programming languages and frameworks. This makes it quick and easy to reimplement a proven R process in another language and framework. In this case, C# on .NET.

Though it seems counter-intuitive, this duplication of work speeds up our development of new features. We gain the advantages of R while prototyping. And we quickly produce data and documents for discussions that combine all of our team’s experience and prepare benchmarks to judge our final implementations against.

Then we are able to use the power of C# and .NET to run code in parallel, manage memory better, and access low-level accelerations such as CPU Vector Extensions (AVX-512) and GPU computation (Cuda). In .NET we regularly decrease calculation times by a factor of 1000 compared to prototypes in R.

Your experience of our product

The scores that power our new similar companies feature were calculated over two days on an 8-core Intel CPU and a GeForce 1080 GPU. Like the machine-learning and data processing that you experience on our platform today the code is written in C# on .NET.

We think that this guarantees the highest reliability, the best performance, the fewest security risks, and the easiest deployment. But we are aware of the disadvantages of this approach. So increasingly we develop the algorithms we deploy first in R or Python. And we use those languages to continually check the quality of our data in production and develop improvements.